Spark-Based Telecom Customer Churn Data Analysis System (Graduation Project)

舟率率 3/28/2026 springboot redis sqoop java vue

# Project Overview

# Data Type

Telecom customer churn data

# Development Environment

CentOS 7

# Software Versions

Hadoop 3.2.0, Hive 3.1.2, Spark 3.1.2, MySQL 5.7.38, JDK 8

# Development Languages

Java, shell, SQL

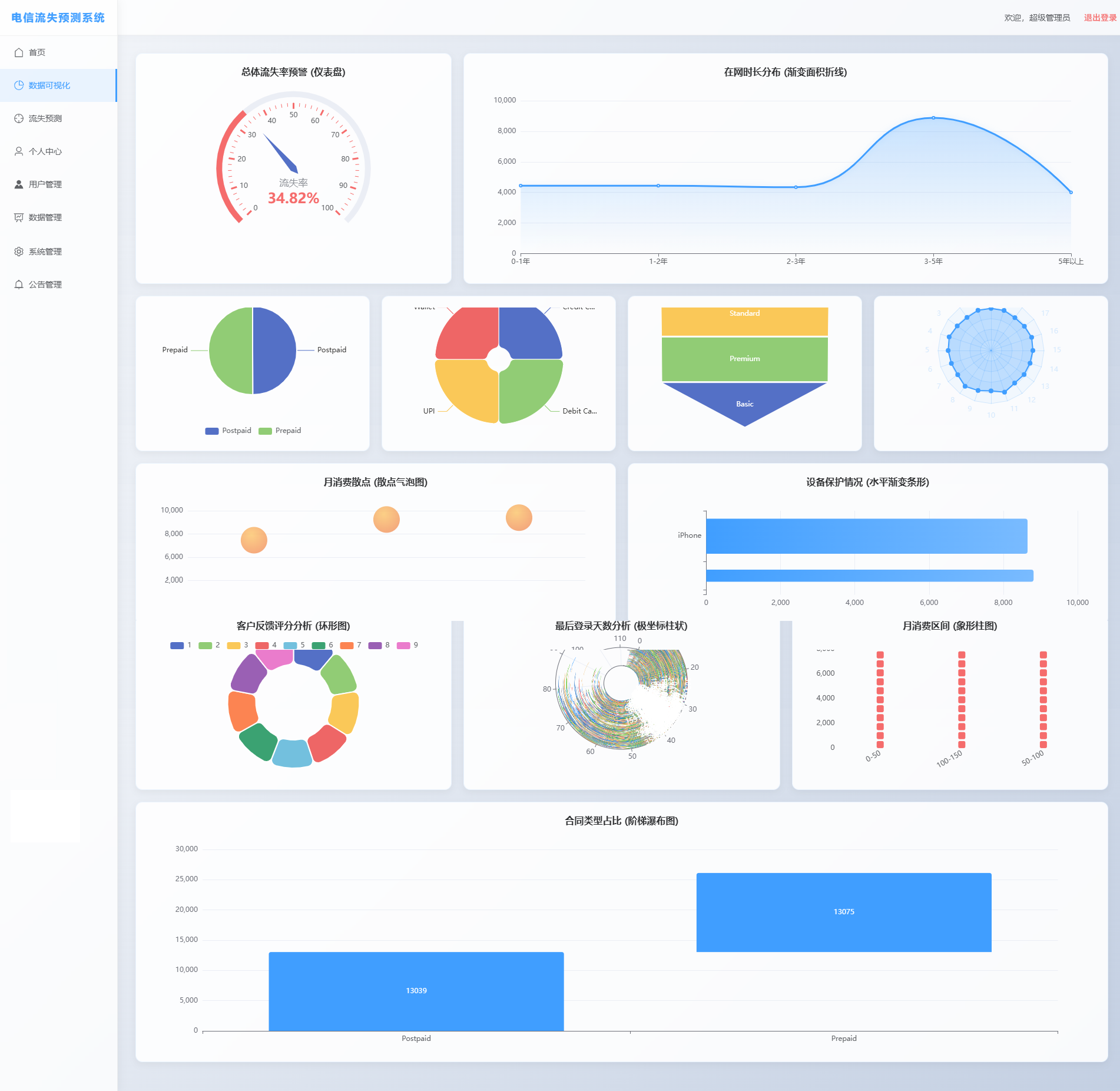

# Visualization Charts

# Steps

# Start MySQL

# Check whether MySQL is running. To start it: systemctl start mysqld.service

systemctl status mysqld.service

# Open the MySQL shell

# MySQL username: root, password: 123456

mysql -uroot -p123456
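The check-then-start step above can be folded into one idempotent helper. This is a minimal sketch, assuming a systemd host and the `mysqld.service` unit named above; the function name `ensure_mysql_running` is hypothetical and not part of the project.

```shell
#!/usr/bin/env bash
# Hypothetical helper: start mysqld only when systemd reports it inactive.
ensure_mysql_running() {
    local unit="mysqld.service"
    # `systemctl is-active --quiet` exits 0 only when the unit is active.
    if systemctl is-active --quiet "$unit"; then
        echo "$unit is already running"
    else
        echo "starting $unit"
        systemctl start "$unit"
    fi
}

# Usage (as root or via sudo):
#   ensure_mysql_running
```

Calling the helper twice is harmless, because the second call only reports that the service is already running.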

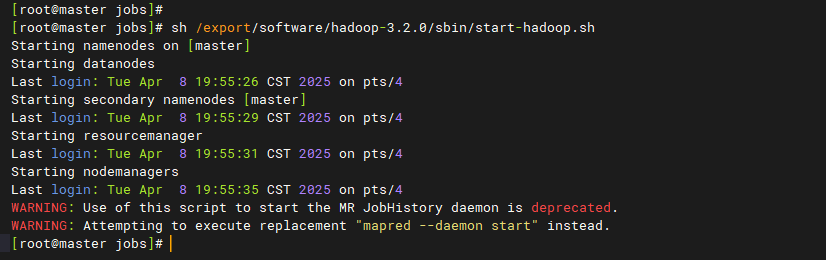

# Start Hadoop

# Leave safe mode: hdfs dfsadmin -safemode leave

# Start Hadoop

bash /export/software/hadoop-3.2.0/sbin/start-hadoop.sh
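Rather than always forcing the NameNode out of safe mode, the state can be queried first. This is a minimal sketch, assuming the NameNode is already up and `hdfs` is on the `PATH`; the function name `leave_safemode_if_on` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: leave HDFS safe mode only if the NameNode reports it ON.
leave_safemode_if_on() {
    local state
    # `hdfs dfsadmin -safemode get` prints "Safe mode is ON" or "Safe mode is OFF".
    state="$(hdfs dfsadmin -safemode get)"
    case "$state" in
        *"Safe mode is ON"*)
            echo "NameNode is in safe mode, leaving"
            hdfs dfsadmin -safemode leave
            ;;
        *)
            echo "NameNode is not in safe mode"
            ;;
    esac
}

# Usage, once the start script above has finished:
#   leave_safemode_if_on
```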

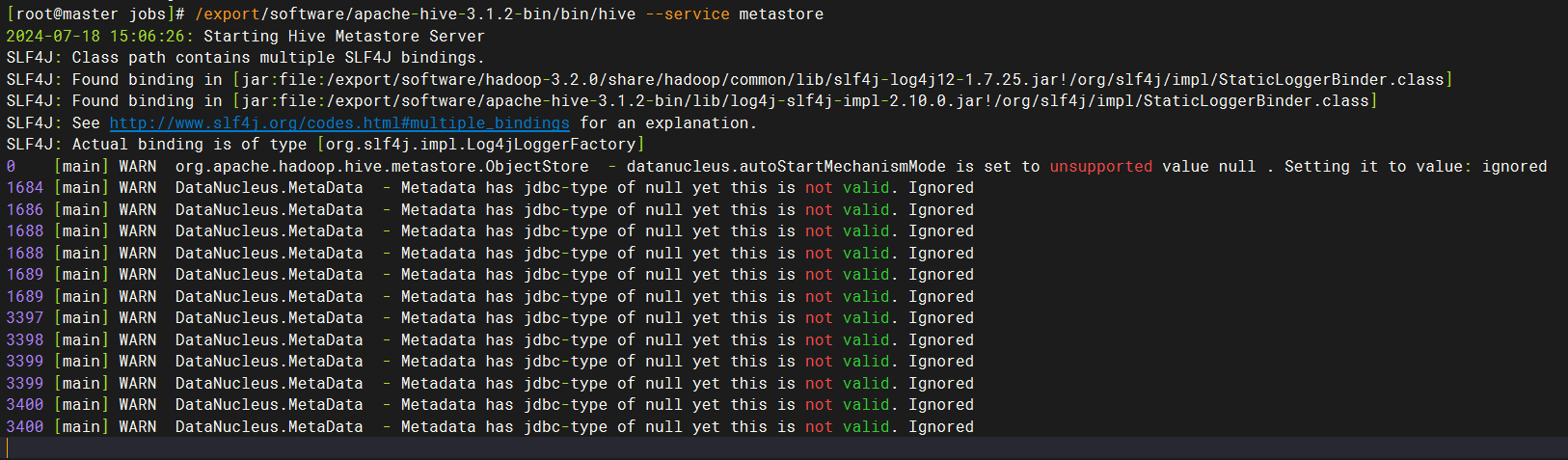

# Start Hive

# In the first terminal window, run this and wait 10-20 seconds

/export/software/apache-hive-3.1.2-bin/bin/hive --service metastore

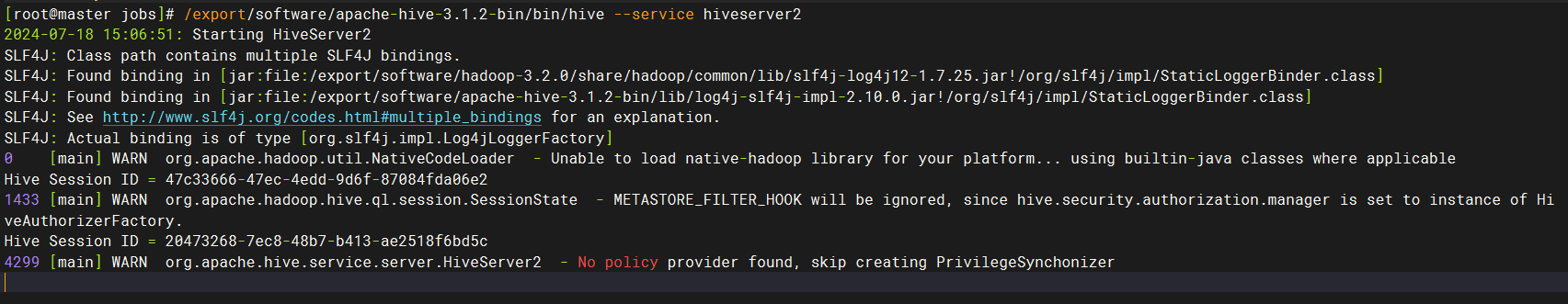

# In the second terminal window, run this and wait 10-20 seconds

/export/software/apache-hive-3.1.2-bin/bin/hive --service hiveserver2

# To connect to the Hive shell, use the following command:

# /export/software/apache-hive-3.1.2-bin/bin/beeline -u jdbc:hive2://master:10000 -n root
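Instead of waiting a fixed 10-20 seconds, the ports can be polled until the services accept connections. This is a sketch under assumptions: the metastore listens on Hive's default port 9083 (not stated in this guide), HiveServer2 listens on 10000 as in the JDBC URL above, and the shell is bash, whose `/dev/tcp` pseudo-device is used for the probe. The names `port_open` and `wait_for_port` are hypothetical.

```shell
#!/usr/bin/env bash
# Returns 0 if a TCP connection to host:port succeeds (bash /dev/tcp probe).
port_open() {
    (exec 3<>"/dev/tcp/$1/$2") 2>/dev/null
}

# Poll host:port once per second until it opens or the timeout (seconds) elapses.
wait_for_port() {
    local host="$1" port="$2" timeout="${3:-60}" waited=0
    until port_open "$host" "$port"; do
        if [ "$waited" -ge "$timeout" ]; then
            echo "timed out waiting for $host:$port" >&2
            return 1
        fi
        sleep 1
        waited=$((waited + 1))
    done
    echo "$host:$port is accepting connections"
}

# Usage (9083 is an assumed default; adjust if hive-site.xml overrides it):
#   wait_for_port master 9083  && echo "metastore ready"
#   wait_for_port master 10000 && echo "hiveserver2 ready"
```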

# Prepare the Directory

mkdir -p /data/jobs/project/

cd /data/jobs/project/

# Extract "data.7z" from the "project-spark-telecom-customer-churn-data-analysis-system/" directory into the current directory

# Upload the entire "project-spark-telecom-customer-churn-data-analysis-system" folder to the "/data/jobs/project/" directory
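After uploading, it can help to confirm that the subdirectories used by the later steps are present. This is a minimal sketch; the function name `check_project_layout` is hypothetical, and the subdirectory list is taken only from the paths that appear in this guide.

```shell
#!/usr/bin/env bash
# Hypothetical check: report any subdirectory this guide uses that is missing.
check_project_layout() {
    local root="$1" missing=0 sub
    for sub in "mysql" "前端/front_ui" "后端/springboot-demo" "spark_ml/spark-job"; do
        if [ ! -d "$root/$sub" ]; then
            echo "missing: $root/$sub" >&2
            missing=1
        fi
    done
    # Exit status 0 when everything is present, 1 otherwise.
    return "$missing"
}

# Usage:
#   check_project_layout /data/jobs/project/project-spark-telecom-customer-churn-data-analysis-system
```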

# Initialize the MySQL Tables

cd /data/jobs/project/project-spark-telecom-customer-churn-data-analysis-system/mysql/

mysql -uroot -p123456 < mysql.sql

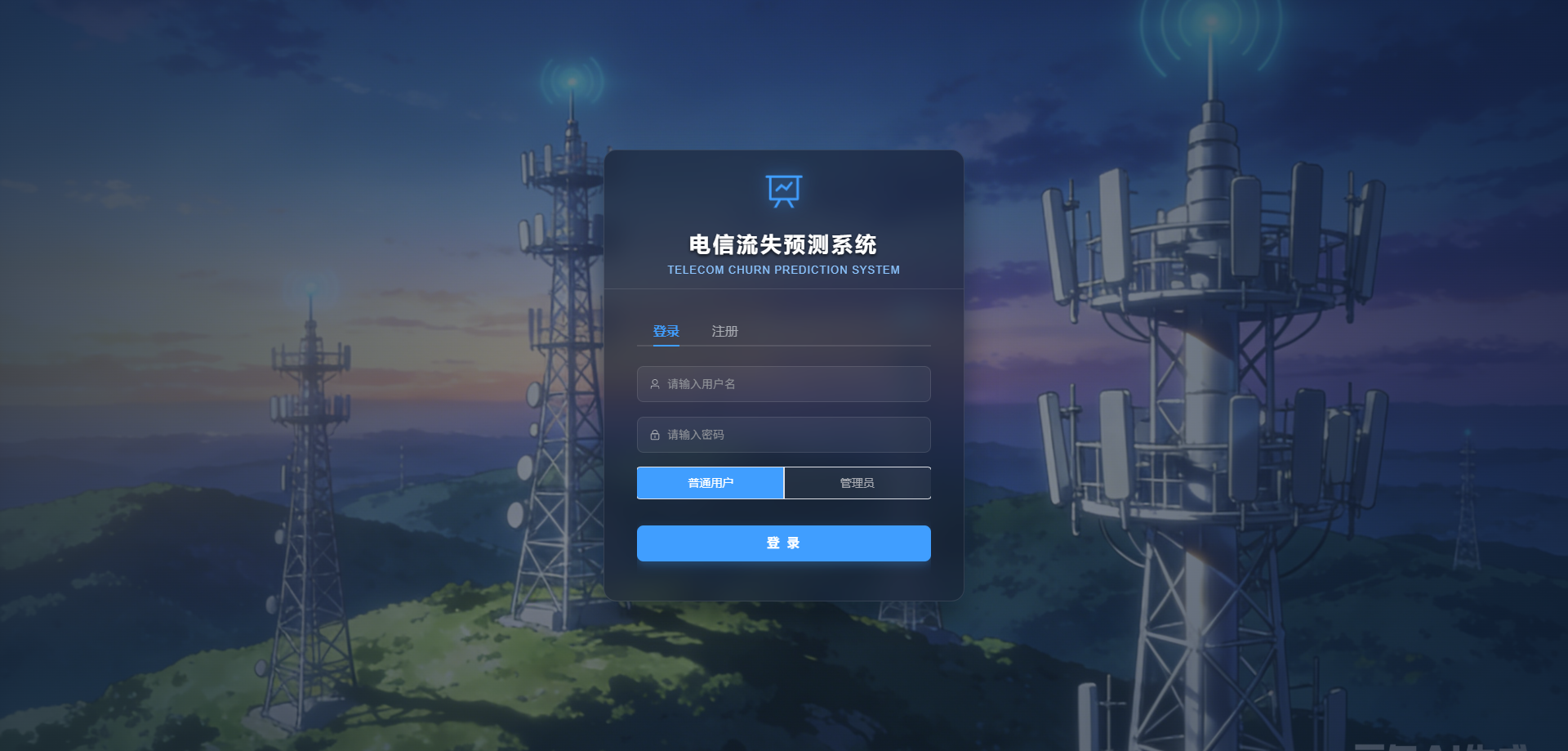

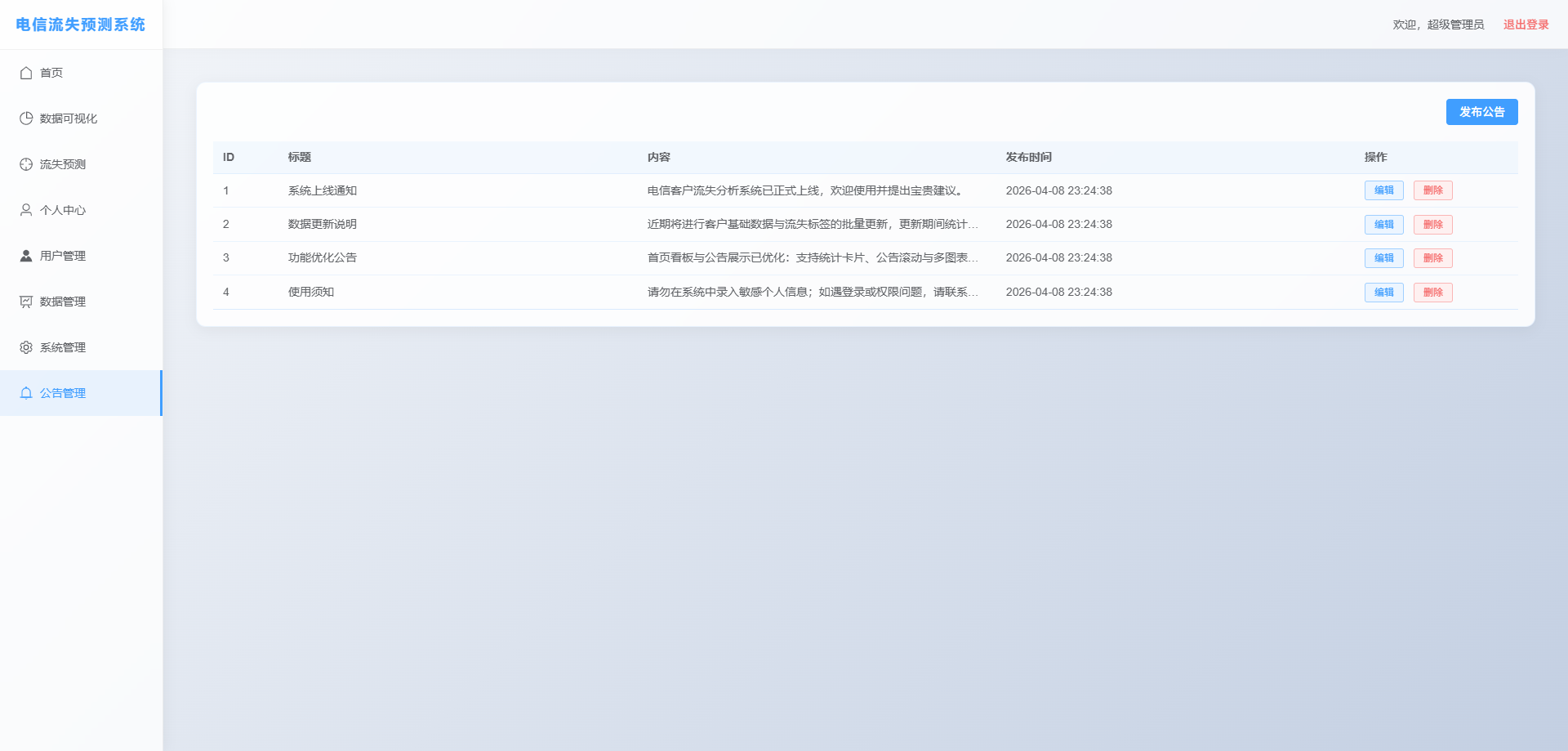

# Start the Frontend

cd /data/jobs/project/project-spark-telecom-customer-churn-data-analysis-system/前端/front_ui/

# Install Node dependencies

npm install --registry=https://registry.npmmirror.com

chmod -R 755 node_modules/.bin

npm run dev

# http://master:8080/login

# Login user: admin

# Login password: 123456
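Once `npm run dev` is running, the login URL above can be probed from another terminal to confirm that the dev server is answering. This is a minimal sketch, assuming `curl` is installed; the function name `frontend_ready` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical check: succeeds once the dev server answers on the login URL.
frontend_ready() {
    local url="${1:-http://master:8080/login}"
    # -s: quiet, -f: non-zero exit on HTTP errors, --max-time: give up after 5 s
    if curl -sf --max-time 5 -o /dev/null "$url"; then
        echo "frontend is up: $url"
    else
        echo "frontend not reachable: $url" >&2
        return 1
    fi
}

# Usage:
#   frontend_ready
```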

# Start the Backend

cd /data/jobs/project/project-spark-telecom-customer-churn-data-analysis-system/后端/springboot-demo/

mvn clean package -DskipTests

java -jar target/springboot-demo-1.0-SNAPSHOT.jar --model.path=/data/output/model --app.fileBaseDir=/data/jobs/project/project-spark-telecom-customer-churn-data-analysis-system
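The launch command above fails with a terse error if the Maven build did not produce the jar. A small guard makes that case explicit. This is a sketch, assuming it is run from the `springboot-demo` directory; the function name `run_backend` is hypothetical, and the arguments are copied from the command above.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: verify the jar exists, then launch with the same arguments.
run_backend() {
    local base="/data/jobs/project/project-spark-telecom-customer-churn-data-analysis-system"
    local jar="target/springboot-demo-1.0-SNAPSHOT.jar"
    if [ ! -f "$jar" ]; then
        echo "jar not found: $jar (run: mvn clean package -DskipTests)" >&2
        return 1
    fi
    java -jar "$jar" --model.path=/data/output/model --app.fileBaseDir="$base"
}

# Usage, from the springboot-demo directory:
#   run_backend
```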

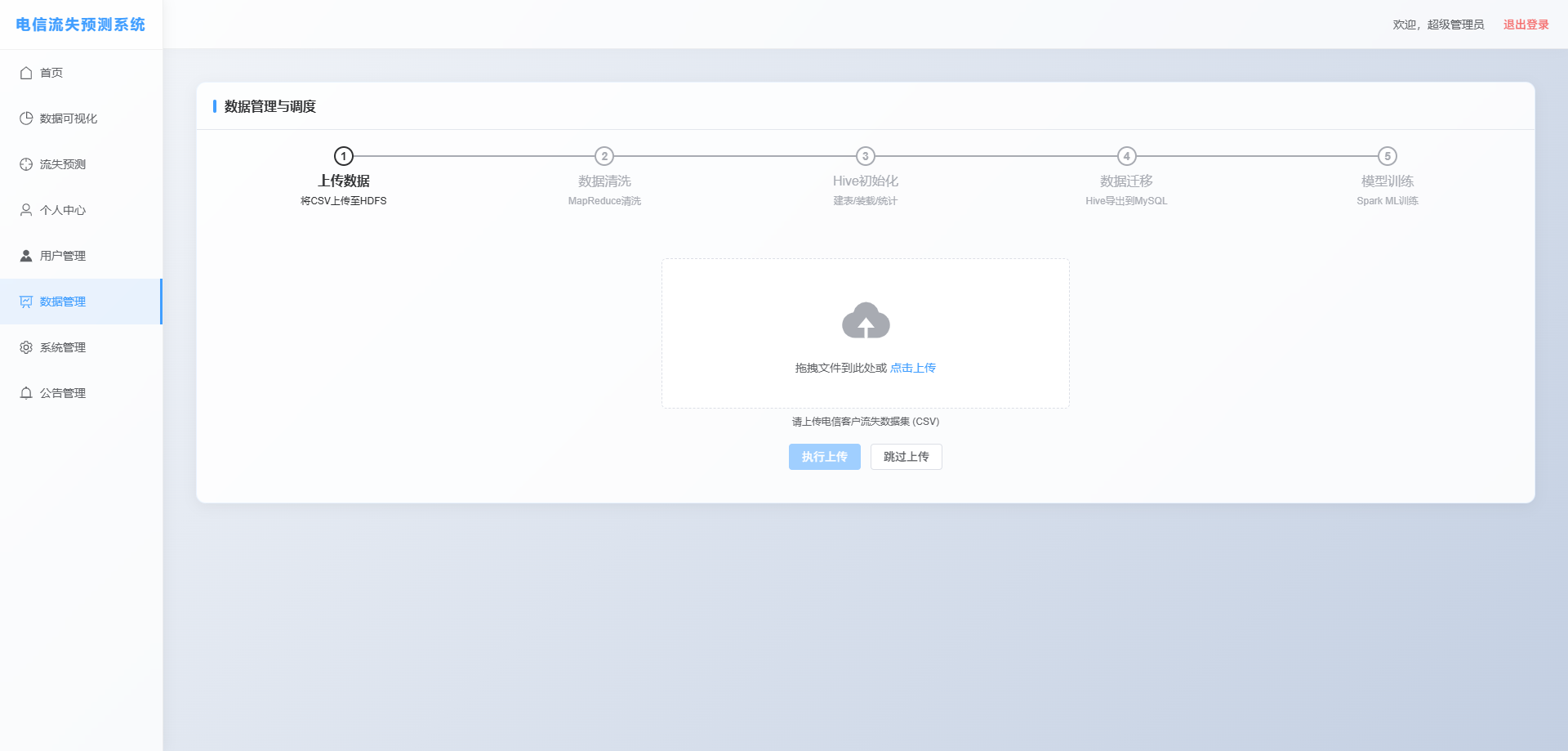

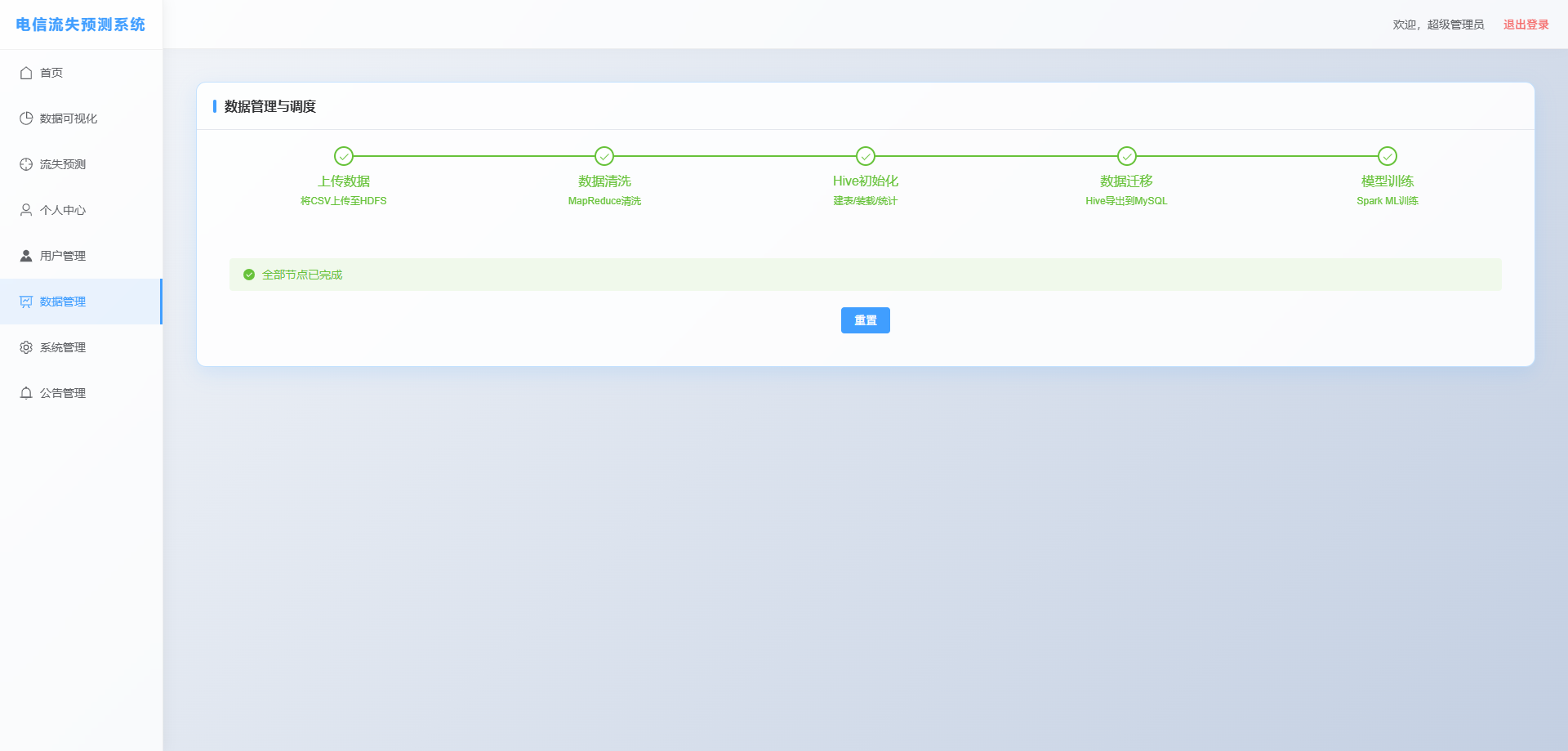

# After the project is running and the "Data Cleaning" step has finished, you can optionally train the model manually

cd /data/jobs/project/project-spark-telecom-customer-churn-data-analysis-system/spark_ml/spark-job/

# mvn clean package -DskipTests

# bash /export/software/spark-3.1.2-bin-hadoop3.2/sbin/start-all.sh

# spark-submit --master yarn --deploy-mode cluster --class org.example.ChurnModelTrain target/spark-job.jar /data/input/ /data/output/model/
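The commented-out training command above can be wrapped so that a missing jar is reported before anything is submitted. This is a sketch, assuming it is run from the `spark-job` directory after the Maven build; the function name `train_churn_model` and the `SPARK_SUBMIT` override variable are hypothetical, while the class name, jar path, and input/output paths are taken from the command above.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the manual training step.
train_churn_model() {
    local jar="target/spark-job.jar"
    # SPARK_SUBMIT is a hypothetical override, e.g. SPARK_SUBMIT=echo for a dry run.
    local submit="${SPARK_SUBMIT:-spark-submit}"
    if [ ! -f "$jar" ]; then
        echo "jar not found: $jar (build it with: mvn clean package -DskipTests)" >&2
        return 1
    fi
    "$submit" --master yarn --deploy-mode cluster \
        --class org.example.ChurnModelTrain \
        "$jar" /data/input/ /data/output/model/
}

# Usage, from the spark-job directory:
#   SPARK_SUBMIT=echo train_churn_model   # print the arguments without submitting
#   train_churn_model                     # submit to YARN
```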
